The AI Bubble: Is NVIDIA Just Recycling Its Own Money?

The New Gold Rush

The California Gold Rush left a long-lasting impact on the United States (US) and is widely considered one of the most significant events of the first half of the nineteenth century. People from as far away as the Ottoman Empire (present-day Turkey), North Africa, and Eastern Europe flocked to the US to capitalize on this ‘golden’ opportunity. Beginning in 1848, roughly 300,000 people, about 0.03% of the world's population at the time, headed to California in search of gold. Most never struck gold and left empty-handed.

Most prospectors didn’t make substantial amounts of money; the merchants who sold them shovels and food did. One of the best-known examples is Levi Strauss, a Bavarian immigrant who sold sturdy denim workwear to gold seekers passing through San Francisco. The name remains globally relevant today: Levi Strauss & Co. reported net revenue of about $6.36B in 2024.

Today, California is experiencing a new kind of gold rush, one driven by the race to build and deploy artificial intelligence (AI). But the real question is: is this “pot of gold” even more elusive, yet potentially far larger? And more importantly, will this rush shape civilization’s long-term future, or will it fade as just another short-lived boom?

Is AI in a bubble?

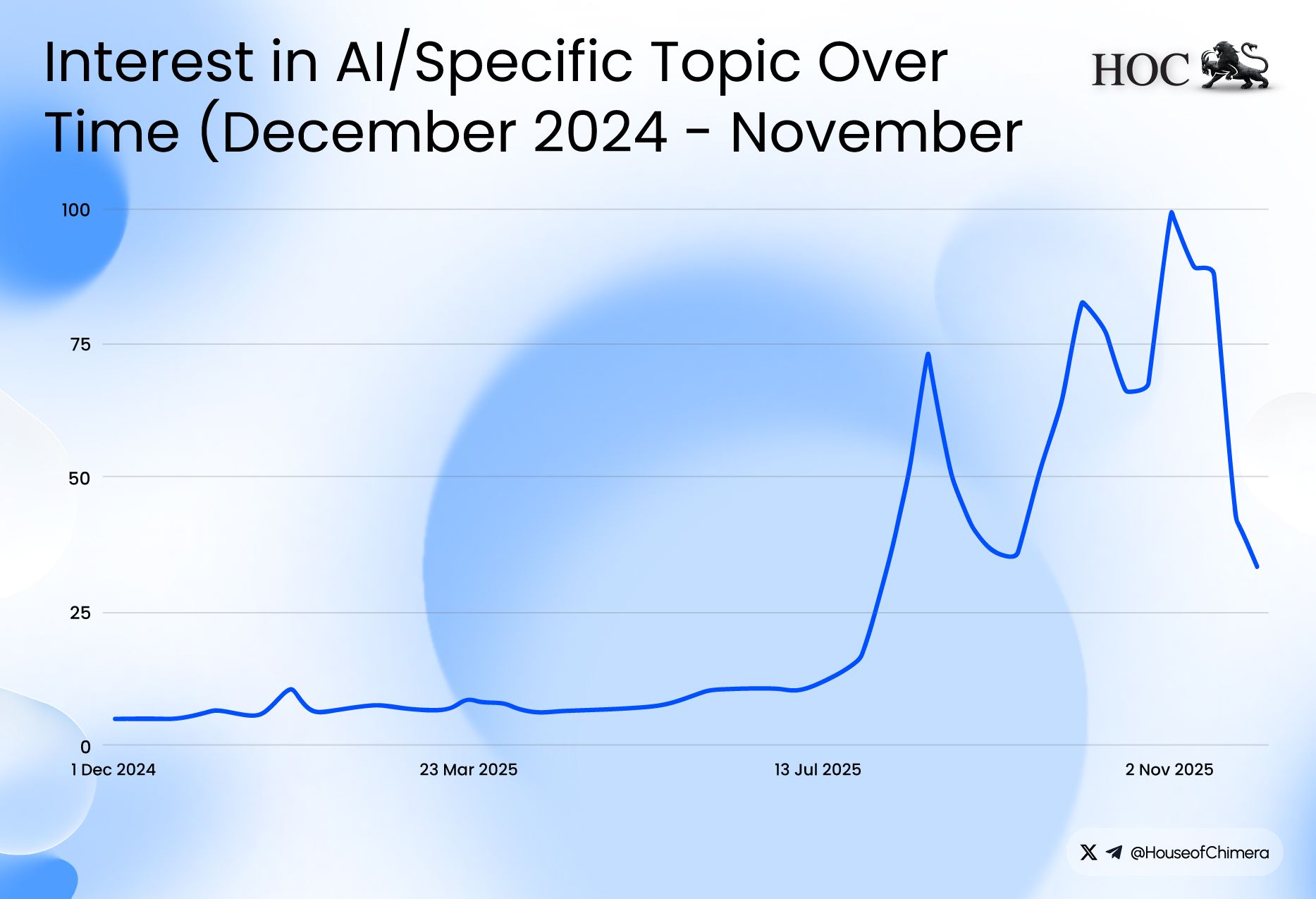

Search interest in the idea that AI might be in a bubble has exploded in the last few weeks, according to Google Trends. Several key institutions, including the Bank of England, Deutsche Bank, and the International Monetary Fund (IMF), have argued that AI exhibits bubble-like characteristics. The concern stems from the sharp increase in the value of AI-related companies over the last year, as investment continues to flow in.

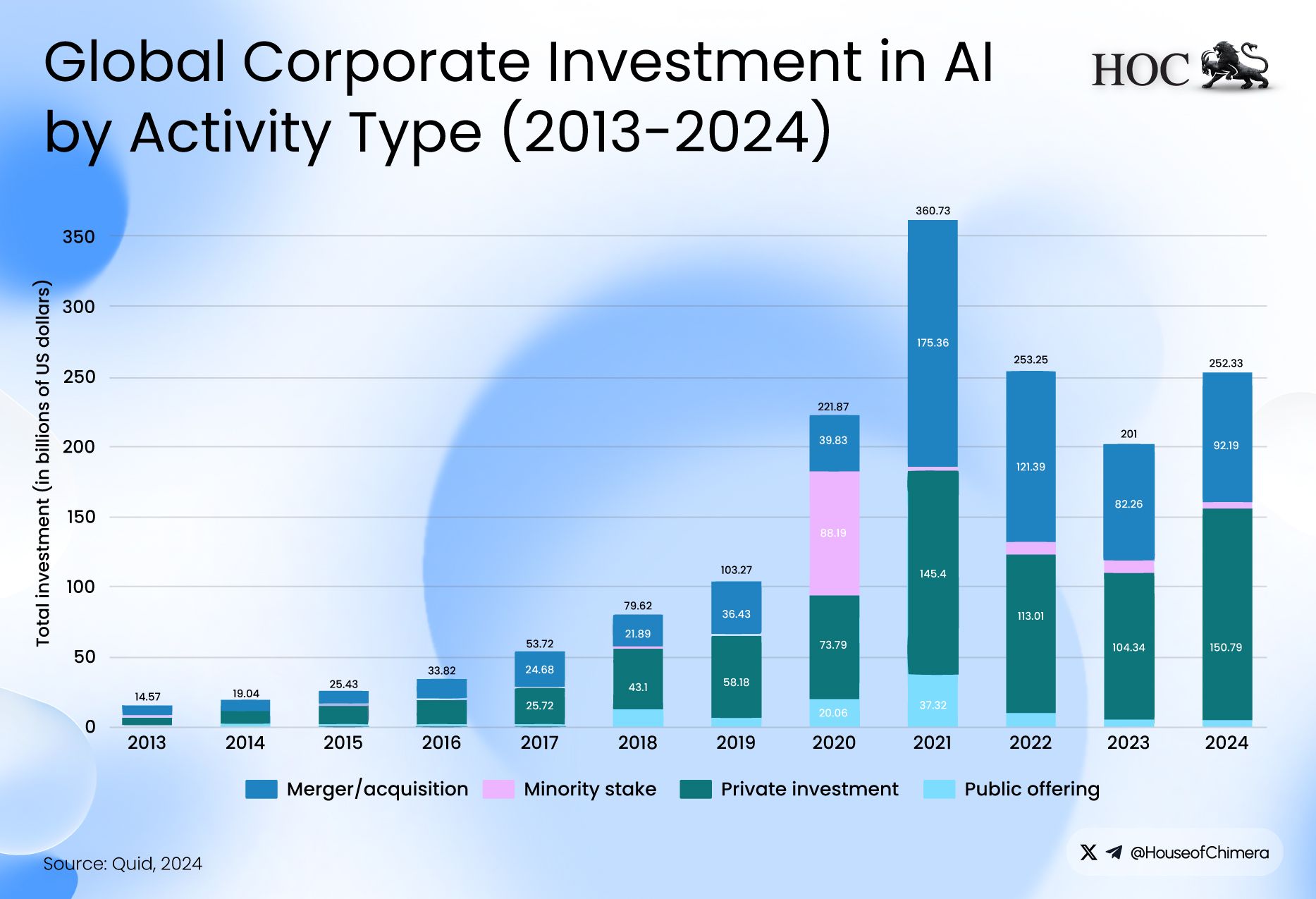

According to UBS, global annual AI spending is expected to swell to 375 billion USD, a significant increase over the last year. So who is spending so much capital on AI? Venture capital is flowing into AI deals, but more importantly, the bulk of the spending stems from a handful of companies: NVIDIA, OpenAI, Oracle, Amazon, and Anthropic.

The Circular Economy of AI

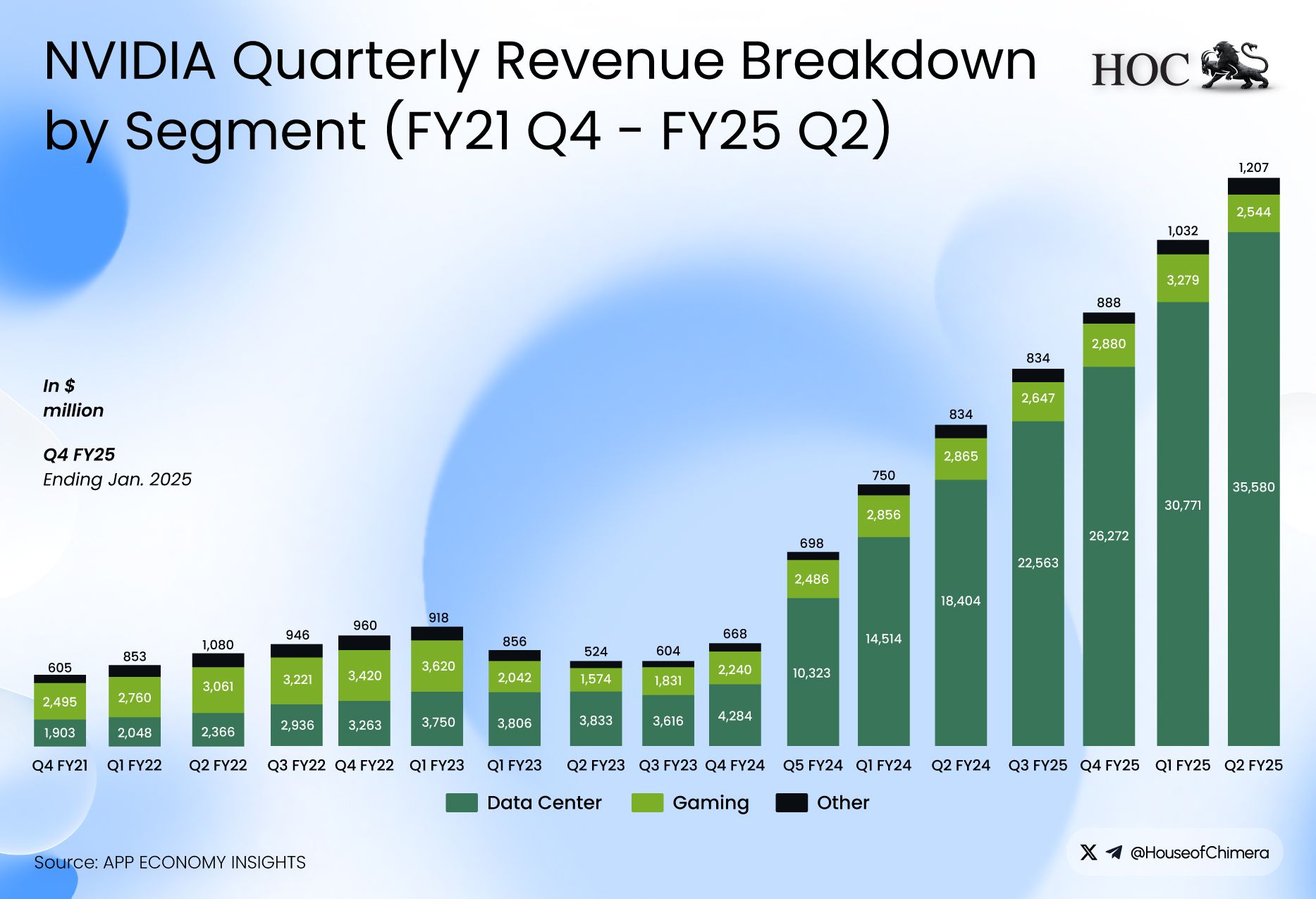

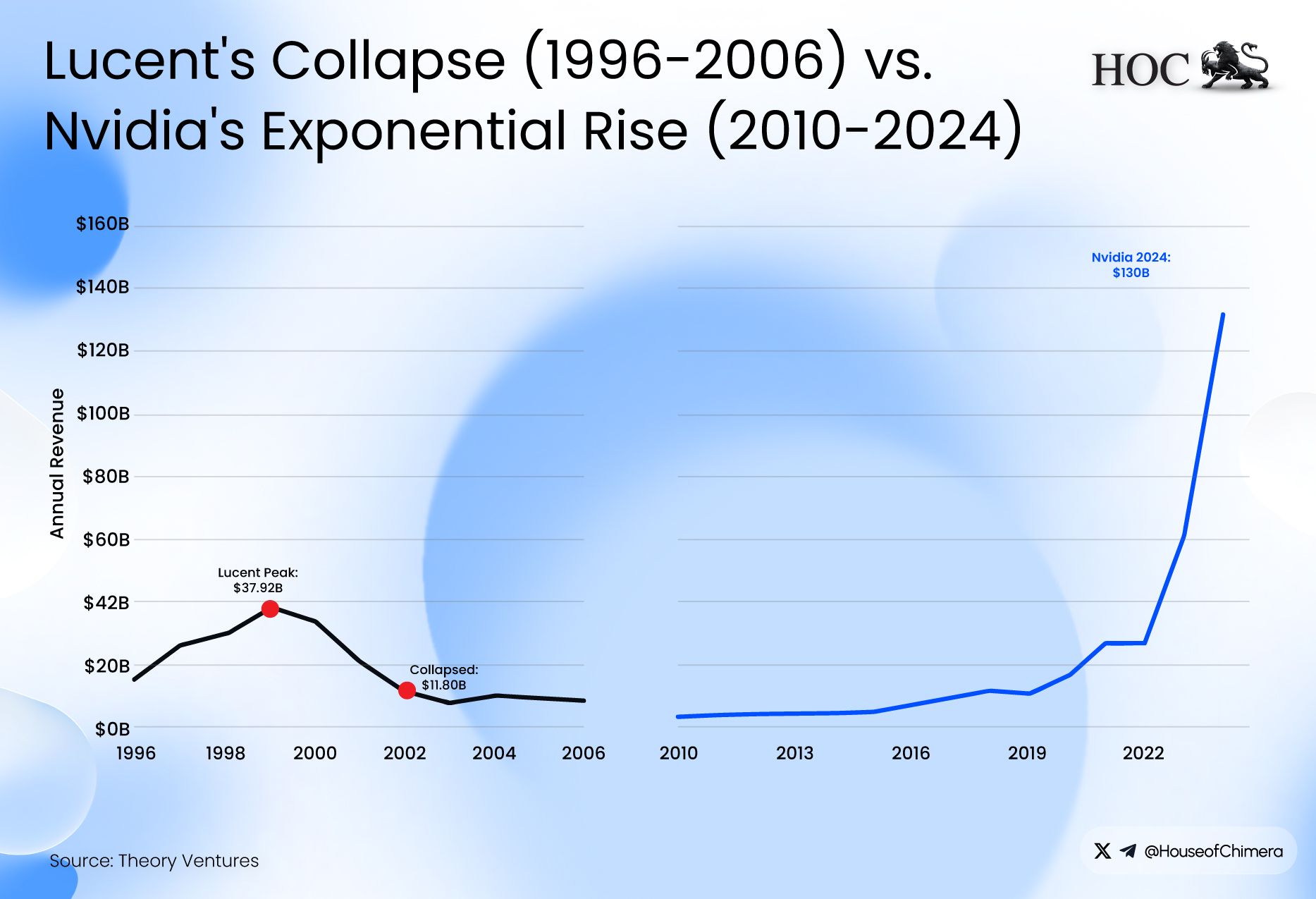

NVIDIA, the world's largest Graphics Processing Unit (GPU) manufacturer, has gone through an exceptional two-year cycle: its total operating revenue nearly doubled, driven predominantly by its data center revenues. Increasing demand for compute drives these revenues. According to Jensen Huang, Founder and CEO of NVIDIA, “Blackwell sales are off the charts, and cloud GPUs are sold out,” and “The AI ecosystem is scaling fast - with more new foundation model makers, more AI startups, across more industries, and in more countries. AI is going everywhere, doing everything, all at once”. NVIDIA’s success is hard to dispute: it’s essentially the Levi Strauss of AI, only, instead of denim workwear, it sells the compute that powers today’s AI models.

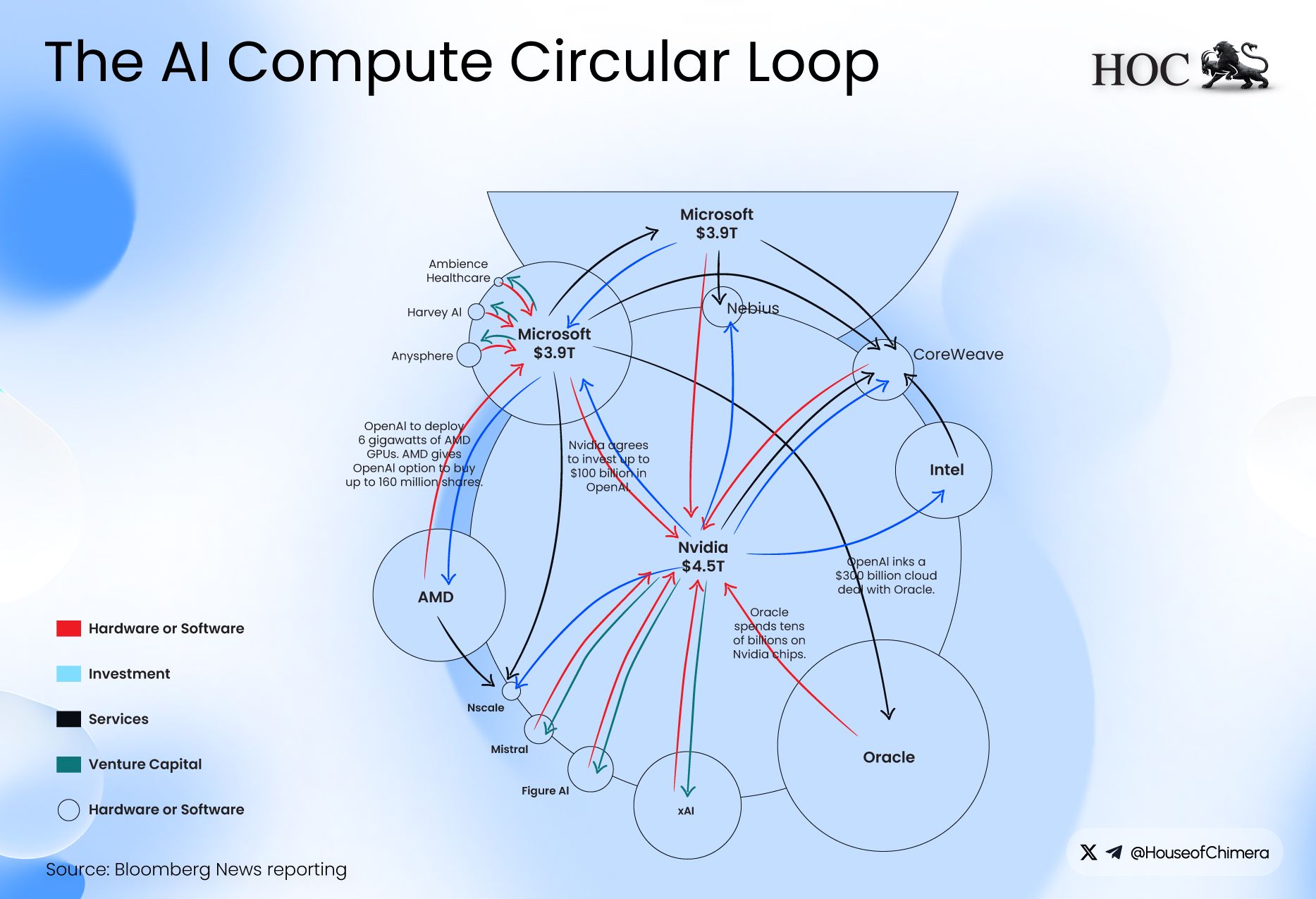

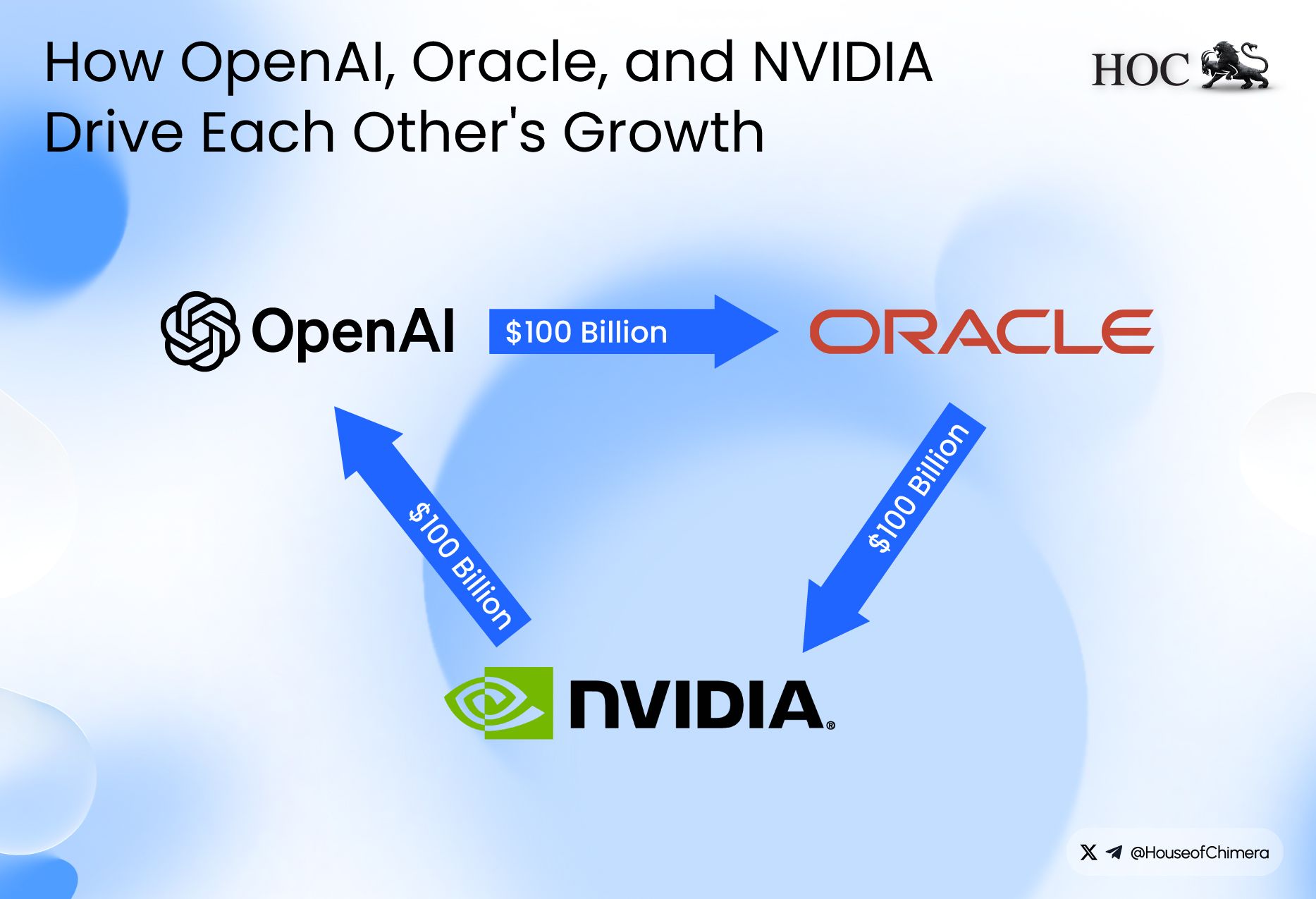

One of the most potent forces in the AI space is undoubtedly OpenAI. The company is currently valued at a staggering 500 billion USD, making it as valuable as Mastercard, yet it has lower revenues and a significantly larger operating deficit. OpenAI is estimated to generate about 20 billion USD in revenue while incurring an operating loss of 70 billion USD. With these numbers in mind, it is fair to wonder how OpenAI can pay for its deal spree. Among the multiple deals it has inked is an agreement to spend 300 billion USD on data centre capacity from Oracle to meet the computing demand of its AI models. In turn, Oracle has bought NVIDIA GPUs for its data centres. To complete the circle, NVIDIA has invested in OpenAI, enabling it to fund these deals in the first place. Everyone in the circle reports rising revenue, rising valuations, and surging share prices, but the cash is moving in circles. Seen this way, all this churn is just NVIDIA recycling its own money rather than creating new value.
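To make the circular-flow argument concrete, here is a minimal toy ledger. The three flows and the round figure of 100 (in billions of USD) are hypothetical values chosen only for illustration, not reported numbers, and the sketch ignores the equity stake NVIDIA receives, hardware costs, and outside capital.

```python
from collections import defaultdict

# Hypothetical, round-number flows in billions of USD. They only mirror
# the shape of the loop described above; they are not reported figures.
FLOWS = [
    ("NVIDIA", "OpenAI", 100, "investment"),
    ("OpenAI", "Oracle", 100, "revenue"),
    ("Oracle", "NVIDIA", 100, "revenue"),
]


def summarize(flows):
    """Return (reported revenue, net cash change) per company."""
    revenue = defaultdict(int)
    net_cash = defaultdict(int)
    for payer, payee, amount, kind in flows:
        net_cash[payer] -= amount
        net_cash[payee] += amount
        # Only payments for goods or services count as the payee's revenue.
        if kind == "revenue":
            revenue[payee] += amount
    return dict(revenue), dict(net_cash)


revenue, net_cash = summarize(FLOWS)
for company in ("NVIDIA", "OpenAI", "Oracle"):
    print(f"{company:7s} revenue={revenue.get(company, 0):4d}  "
          f"net cash={net_cash[company]:+5d}")
```

In this toy setup, 200 of revenue is reported across the loop while no company ends up with more cash than it started with. Real flows are far messier, but it shows why rising revenue alone does not prove that new money entered the system.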

Echoes of the Dot-Com Bust

One parallel that’s been drawn repeatedly is that the AI capex flywheel (i.e. hyperscalers, model labs, and vendors recycling spend into more buildout) resembles the dot-com bubble, where Cisco was the “shovel seller.” Many companies with thin fundamentals surged in value before collapsing. Iconic examples include Pets.com, Webvan, and eToys, each of which went public and then failed within a short period. Boo.com never reached an IPO but still became a cautionary tale, reportedly burning roughly $135M in venture funding in around 18 months before liquidation.

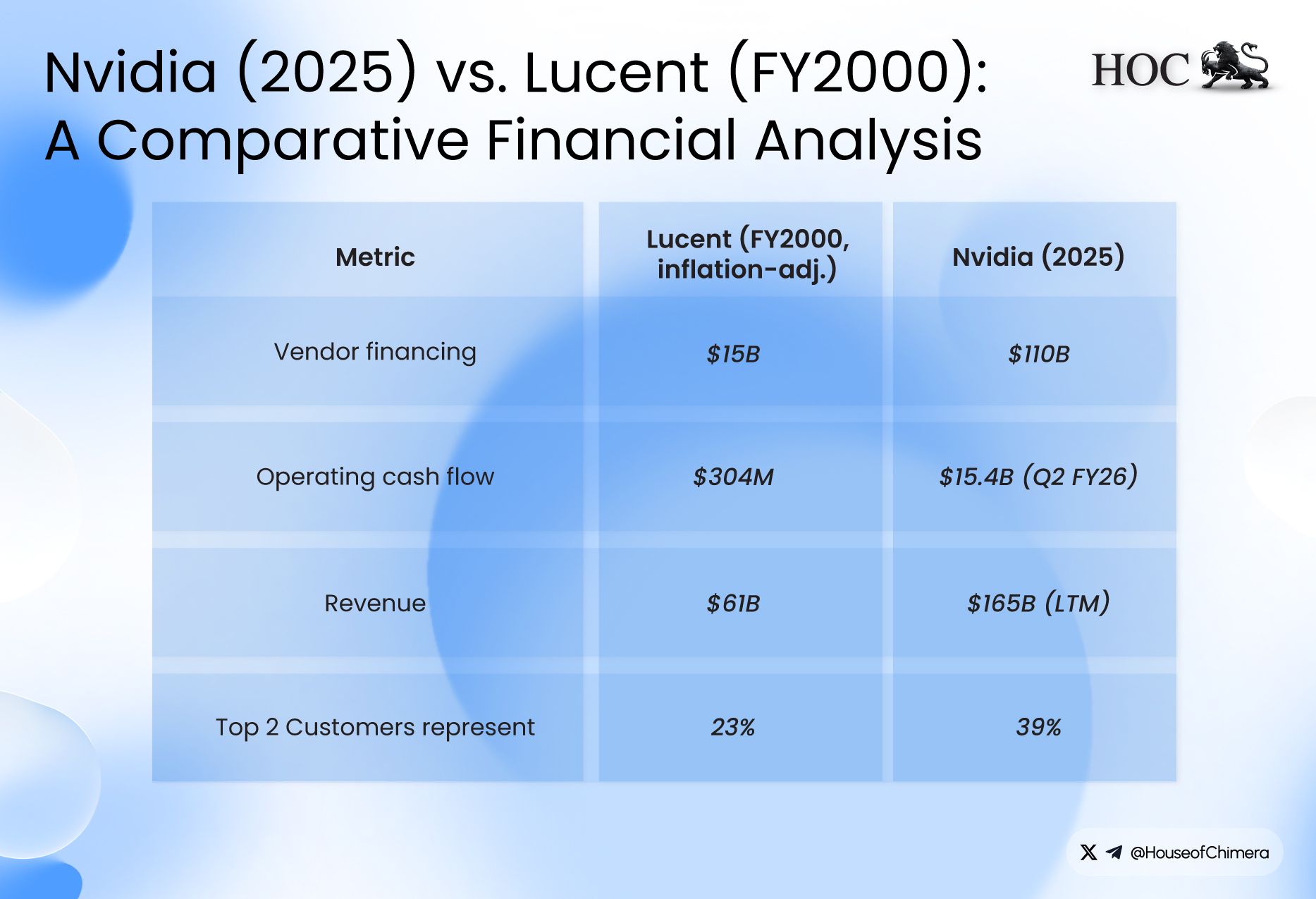

Another parallel is “vendor financing,” which can destabilize the markets. Late in the 1990s dot-com boom, hardware suppliers like Cisco made enormous profits selling networking gear to ISPs. To accelerate rollouts and drive even more purchases, Cisco also extended loans to those ISPs. When the boom broke, and the scale of overbuilt internet capacity became painfully apparent, many ISPs couldn’t repay. The QQQ, the ETF that tracks the Nasdaq-100, fell about 70%, and, roughly twenty-five years on, Cisco’s share price still hasn’t regained its 2000 peak.

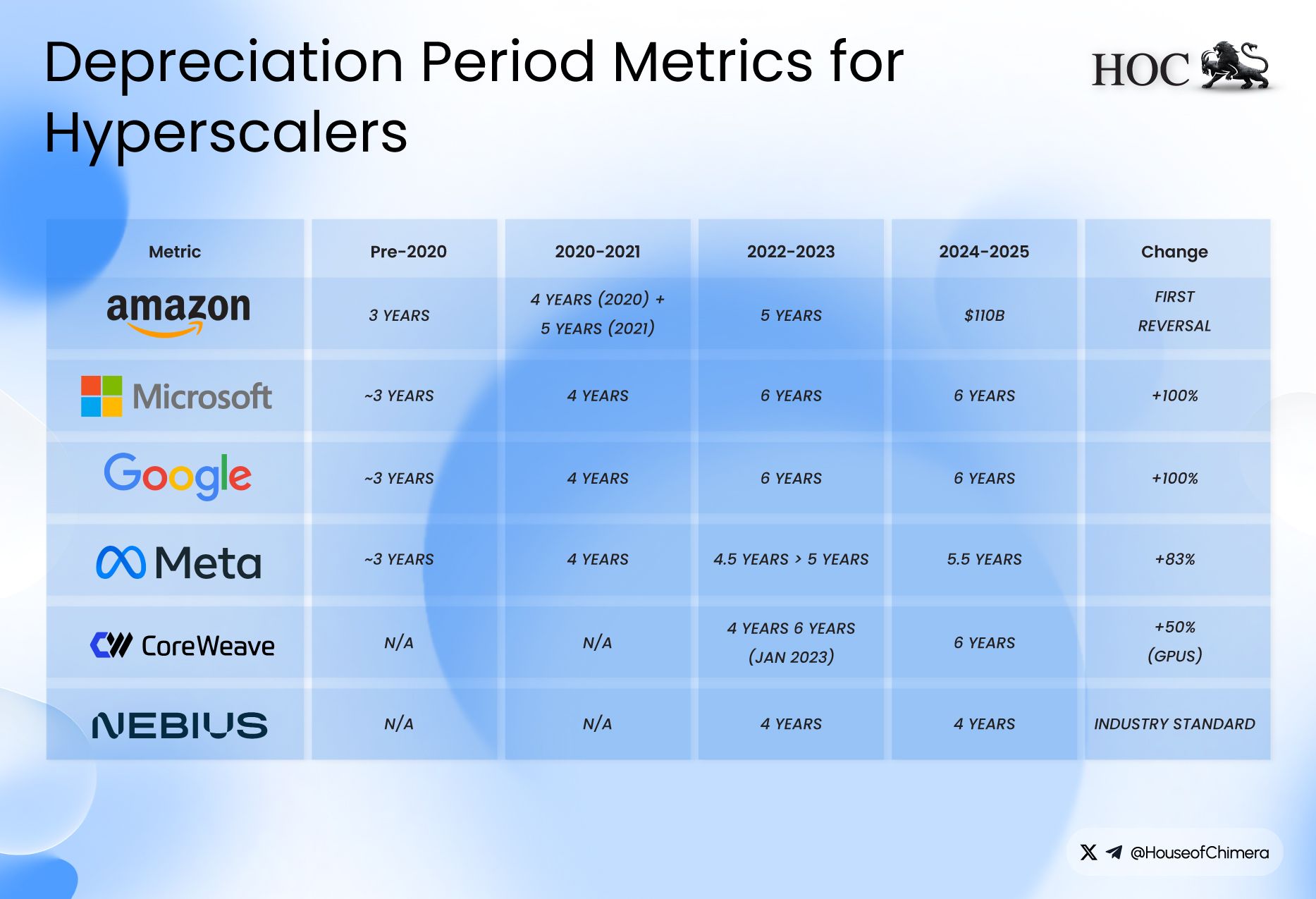

The last striking parallel is the concern that companies are using accounting tricks to make their operating cash flow appear stronger than it actually is. In the dot-com era, channel stuffing (pulling forward demand by pushing excess inventory into the distribution channel) and improperly recognizing revenue early were among the main reasons companies appeared operationally healthier than they were. In the current era, the big tech companies have all extended the expected economic life of their GPUs, going from 3 to 5 or 6 years. However, Jensen Huang said that “when Blackwell starts shipping in volume, you couldn't give Hoppers away,” adding: “There are circumstances where Hopper is fine. Not many.” This raises the question: is it valid to significantly extend the economic life of these GPUs?

If the “real” asset lifespan is closer to 2–3 years, a plausible assumption given the pace of computing advances, the faster depreciation could shave roughly 5–10% off these companies’ EPS. If Jensen’s expectations are correct, the hit could be as much as $4T, or nearly one-third of their current combined market cap. However, several experts have weighed in, suggesting there may actually be a use case for these older, “dusty” GPUs after all.
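The mechanics behind the useful-life debate are plain straight-line depreciation: annual expense equals cost divided by assumed life. The sketch below assumes zero salvage value and uses a hypothetical fleet cost of 60 billion USD, a number picked only to keep the arithmetic readable, not any company's actual figure.

```python
def annual_depreciation(cost: float, useful_life_years: float) -> float:
    """Straight-line depreciation, assuming zero salvage value."""
    if useful_life_years <= 0:
        raise ValueError("useful life must be positive")
    return cost / useful_life_years


# Hypothetical GPU fleet cost in billions of USD (illustrative only).
FLEET_COST = 60.0

# Expense under the extended 6-year schedule described in the text.
booked = annual_depreciation(FLEET_COST, 6)

for life in (6, 5, 3, 2):
    expense = annual_depreciation(FLEET_COST, life)
    print(f"{life}-year life: {expense:5.1f}B per year "
          f"({expense - booked:+.1f}B vs. the 6-year schedule)")
```

Halving the assumed life from 6 to 3 years doubles the yearly expense on the same hardware, which is why the choice of schedule matters so much for reported earnings.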

A key counterargument is that GPUs don’t become worthless just because the frontier shifts: data centers can redeploy older cards to non-training workloads, and to “good enough” inference. In practice, A100s, now roughly 5 years old, are still widely used, supporting the idea that GPU utility can persist well beyond the typical “1–3 year” narrative. On the lifecycle side, Lambda and other infrastructure operators argue GPUs can remain economically useful for ~6–8 years, especially when operators ladder them down the stack (i.e. training → inference → lighter workloads) and rely on support/warranty coverage for a large portion of that window.

A specialized AI company like Groq might see its hardware’s value tied almost exclusively to a narrow set of inference workloads, making it more susceptible to rapid obsolescence. In contrast, a hyperscaler like Google, Amazon, or Microsoft runs everything from cloud databases and video transcoding to scientific simulations and internal analytics. For them, a three-year-old H100 may not be obsolete. It can instead be redeployed to accelerate countless other tasks, delivering a significant performance uplift over traditional CPUs and generating economic value for years.

Real-world precedent also shows extended service lives on hyperscaler fleets. Azure retired its NCv2 (P100) and ND (P40) VM series on 6 September 2023, implying a service life of roughly seven years for 2016-era chips, and has announced NCv3 (V100) retirement for 30 September 2025, roughly eight years for a 2017-era GPU generation. This suggests it’s feasible to extract value from GPU assets over a materially longer period than consensus often assumes.

The GPU Oversupply Problem

In 2001, approximately a year after the dot-com bubble burst, Cisco disclosed an inventory write-down of $2.25B and about 8,500 layoffs. Cisco described the excess inventory as tied to a “sudden and significant decrease in demand.” Cisco's stock fell by over 75% within that year. This shouldn’t be surprising: once the rush ended, Cisco’s “shovels” were no longer in such high demand, and many of its former buyers either went bankrupt or were forced to downsize sharply just to keep operating.

The argument that older GPUs can be reused for other tasks has some truth to it: older equipment has been redeployed to lighter, more straightforward workloads in the past. However, this time is different. Compared with 5-10 years ago, investment in GPUs has increased steeply, raising the question of whether the market can absorb such a massive influx.

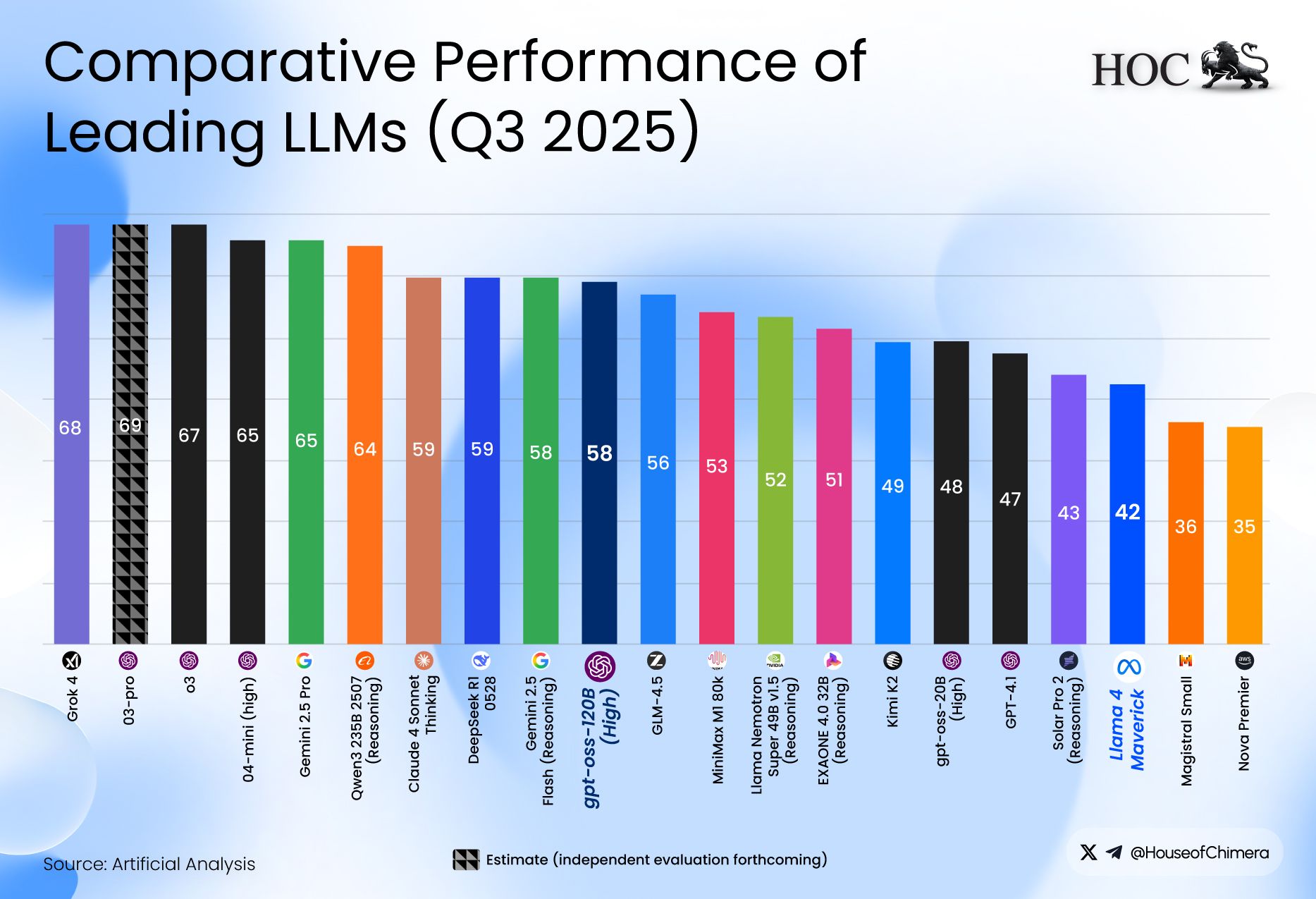

Demand for computing will continue to rise as long as investment remains elevated. However, the marginal demand driving the high-end GPU arms race is still largely tied to training and frontier-scale experimentation, not to the steady-state task of running already-trained models. That matters because many inference and non-AI workloads can be served with materially less hardware than training requires. For example, GPT-OSS-20B can deliver performance comparable to OpenAI o3-mini while running on roughly 16GB of VRAM. In practice, 16GB is a standard configuration even on newer consumer GPUs (e.g., RTX 4080-class cards), whereas 64GB of VRAM is generally not a consumer-GPU baseline. The gap illustrates how quickly the “minimum viable hardware” for a large share of tasks can drop as models and runtimes become more efficient.
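A rough back-of-the-envelope check shows why a 20B-parameter model can fit in consumer-class memory. The sketch below counts weight storage only (parameters times bits per parameter) and ignores the KV cache, activations, and runtime overhead. The round 20B parameter count is read off the model name, and the bit-widths are assumptions for illustration rather than a statement of how GPT-OSS-20B is actually packaged.

```python
def weight_memory_gb(params_billions: float, bits_per_param: float) -> float:
    """Approximate memory for model weights alone, in GB (10^9 bytes)."""
    bytes_total = params_billions * 1e9 * bits_per_param / 8
    return bytes_total / 1e9


VRAM_BUDGET_GB = 16  # the consumer-class budget mentioned in the text

for bits in (16, 8, 4):
    gb = weight_memory_gb(20, bits)
    verdict = "fits" if gb <= VRAM_BUDGET_GB else "does not fit"
    print(f"20B params at {bits:2d}-bit: ~{gb:.0f} GB of weights, "
          f"{verdict} in {VRAM_BUDGET_GB} GB")
```

Under these assumptions, only the roughly 4-bit case leaves headroom inside 16GB, which suggests that lower-precision weights are a large part of what pulls the hardware floor down.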

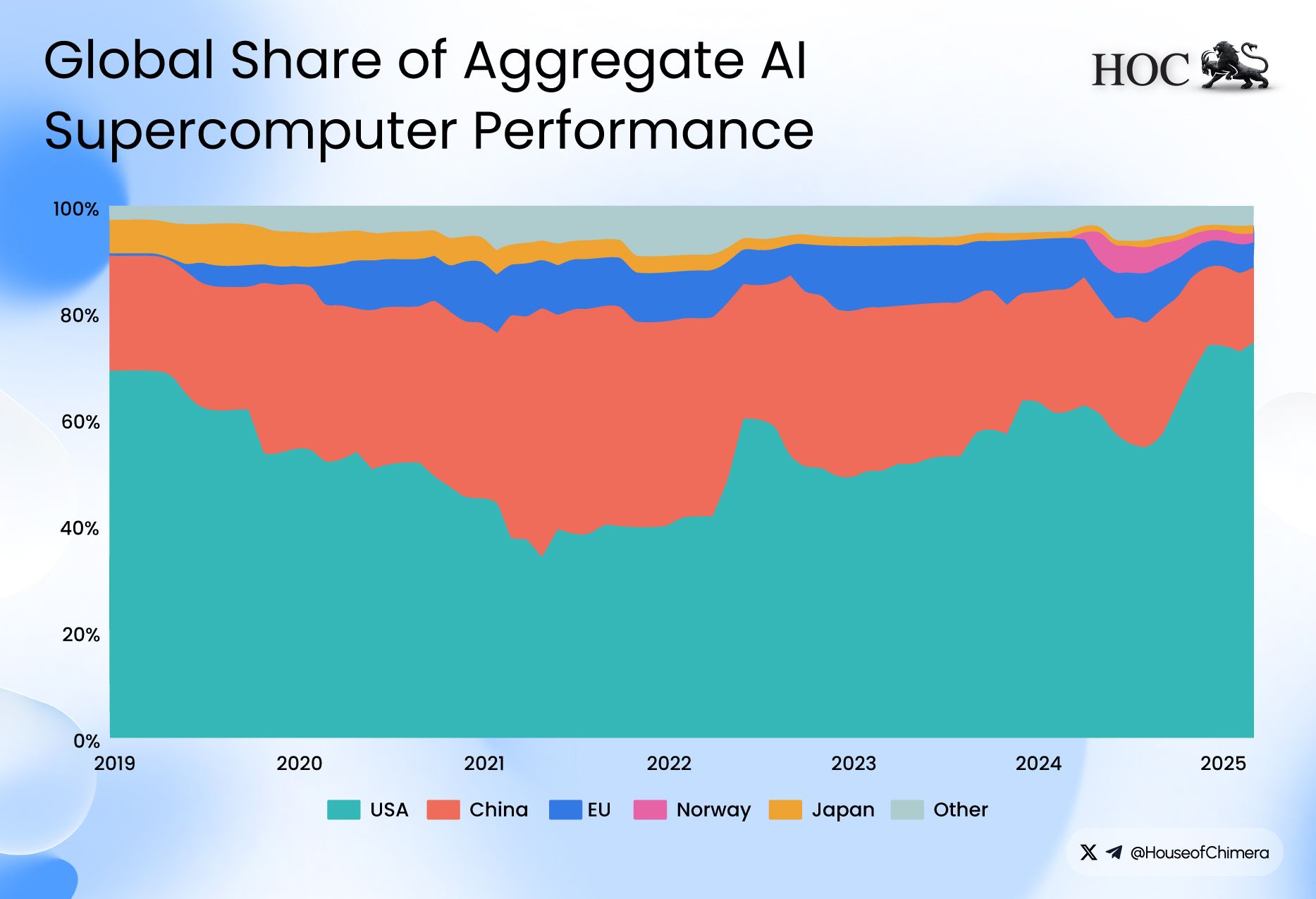

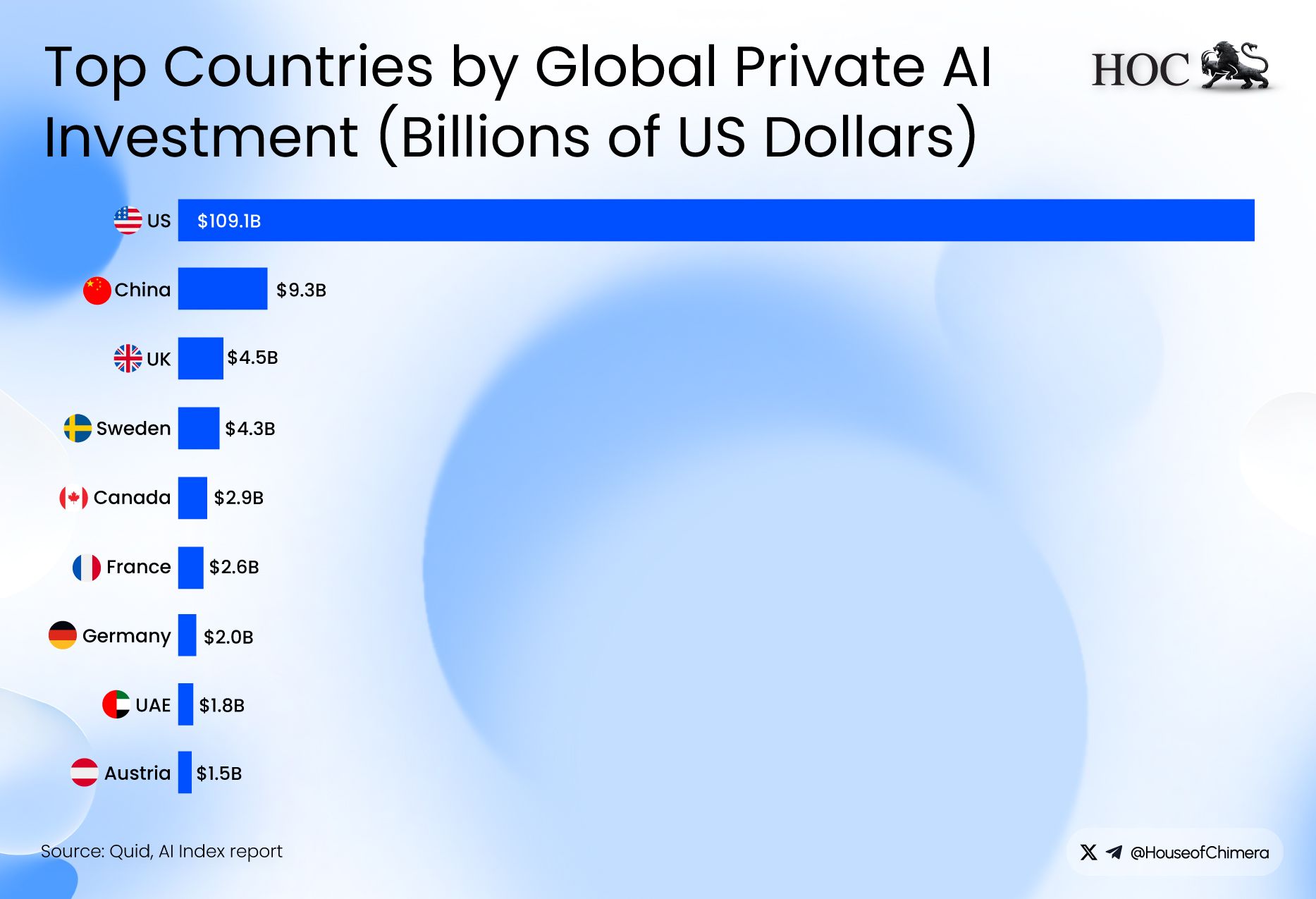

Against that backdrop, the key question is not whether older GPUs can be redeployed (they can), but whether there will be enough demand at scale to absorb them if supply increases. The U.S. has been investing heavily in AI, and a large share of global private AI capital has concentrated there, reinforcing the incentive for firms to continue upgrading in pursuit of performance and cost advantages. If next-generation GPUs deliver a step-change in capability and efficiency, incumbents in an arms race have an obvious reason to prioritize the newest hardware. The practical consequence is that older cards may be pushed down the stack, or outward into secondary markets, increasing the effective supply of “last-gen” capacity.

That’s where the absorption constraint bites. Hyperscalers have expanded their AI offerings substantially, but demand for their broader, non-AI services does not necessarily scale in lockstep. New customers can help soak up capacity, but most entrants are not training frontier models and therefore typically do not need the same volumes of compute. In other words, demand for older GPUs exists, but it is uncertain whether it is sufficient to offset rapid rotation into newer generations, especially if a large wave of redeployed capacity hits the market at once.

When the Music Stops

OpenAI recently signed a $38 billion cloud computing deal with Amazon, Anthropic secured a $15 billion investment commitment from Microsoft and NVIDIA, OpenAI and Oracle signed a $300 billion deal, and, last but not least, NVIDIA claims it will build up to $500 billion of US AI infrastructure in the next four years.

The metaphor of ‘everyone is dancing, until the music stops’ is apt here. The industry is currently filled with euphoria and copium, but is being held together by private investments. These private investments allow OpenAI, xAI, and Anthropic to spend enormous amounts of capital, which mainly flows back to NVIDIA, which in turn recycles it back to these companies. With 81% of total private investment already going towards AI, a slowdown looks very likely; the cycle feels artificial and irrational.

There’s a self-reinforcing loop at work: the U.S. amasses ever more compute, which enables breakthroughs (e.g., faster or better models), and those advances then spur demand for even more compute. It’s a cycle that can’t compound indefinitely; the structural break comes when one link in the chain can no longer finance the next step.

To illustrate the extent of today’s market exuberance, a handful of mega-cap names are doing a disproportionate amount of the heavy lifting across major equity markets:

- U.S. equities: NVIDIA (+30%), Apple (+19%), Google (+83%), Microsoft (+14%), Amazon (+11% YoY), Broadcom (+131% YoY).

- Shanghai / China complex: Tencent (+55%), Agricultural Bank of China (+77%), Alibaba (+90%), ICBC (+47%), China Construction Bank (+29%).

- Taiwan: the market is effectively anchored by TSMC (≈ NT$37T market cap), whose market cap is roughly 10x that of the second-largest name (Hon Hai Precision Industry). TSMC is +35% this year, while Delta Electronics, #3 by market cap and a major supplier of power components used across electronics, including AI-related infrastructure, is up 150%.

- Euronext: ASML (+56%), Prosus (+46%).

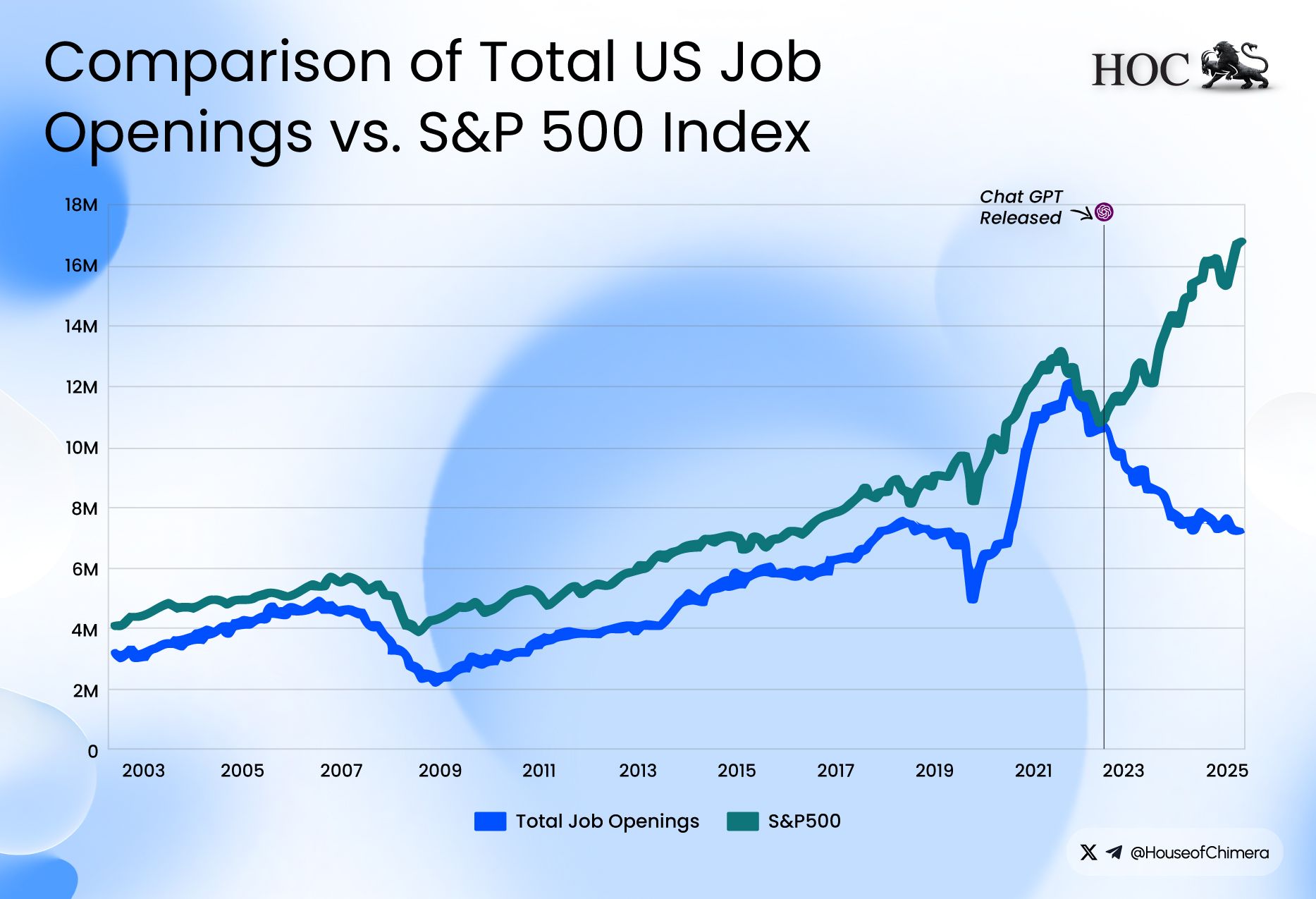

AI-related stocks have accounted for 75 percent of S&P 500 returns and 80 percent of earnings growth since ChatGPT launched in November 2022.

What will be the impact?

The discussion is not about whether AI is here to stay, but about what shape it will ultimately take. In the near term, private AI investment is likely to cool meaningfully as stock market sentiment becomes more rational and valuations gradually return to more normal levels. Some companies appear particularly stretched, with examples like Palantir trading at a price-to-earnings ratio of around 400, a level many would consider difficult to justify outside of the current AI-driven excitement.

This normalization increases pressure on AI firms that are not yet generating meaningful revenue and therefore rely heavily on continued inflows of investment to fund growth. That pressure can extend beyond the application layer as well. NVIDIA, often seen as the leading supplier powering the boom, could be affected if reduced investment leads to slower demand for GPUs. If demand softens, NVIDIA’s operating cash flow could come under pressure, which may also limit how quickly the broader AI buildout can continue and expose just how fragile parts of the current momentum may be.

As a result, a major sell-off could hit the broader economy hard, especially given that roughly 80% of recent earnings growth has been driven by AI-related stocks. At the same time, several warning signs are already flashing: job openings, for instance, have been trending down, suggesting a downturn may already be underway. We’ll publish a separate blog post soon that takes a deeper look at the overall state of the US economy.

One direct consequence for many Americans would be pressure on their 401(k) balances during a market slump. That may not be an immediate problem for everyone, since many 401(k) strategies and target-date funds gradually shift from stocks (i.e. risk-on) toward bonds and cash equivalents (i.e. risk-off) as retirement nears. Still, even if your 401(k) isn’t explicitly AI-focused, AI-linked stocks have driven a large share of S&P 500 returns, and a decline in that segment can pull the broader index down due to high correlation.

This effect is amplified by the fact that major US indices are market-cap weighted: the biggest AI “winners” (or perceived winners) become an increasingly large part of the index. And for retirees, or anyone close to retirement, a significant drawdown right before or shortly after withdrawals begin can permanently reduce how much the portfolio can sustainably support. Target-date funds can reduce that risk by holding more bonds, but they’re not immune to a broader market shock.
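The retirement point above is often called sequence-of-returns risk, and a small simulation makes it tangible. The sketch below uses hypothetical numbers: a 1,000,000 starting balance, a fixed 50,000 annual withdrawal, and a made-up five-year return path with a single -30% year. It compares the same returns in opposite order.

```python
def final_balance(start: float, returns: list[float], withdrawal: float) -> float:
    """Apply each year's return, then take a fixed year-end withdrawal."""
    balance = start
    for r in returns:
        balance = balance * (1 + r) - withdrawal
        balance = max(balance, 0.0)  # a portfolio cannot go below zero
    return balance


# Hypothetical return path: one -30% year and four +10% years.
crash_first = [-0.30, 0.10, 0.10, 0.10, 0.10]
crash_last = list(reversed(crash_first))

START, WITHDRAWAL = 1_000_000.0, 50_000.0

early = final_balance(START, crash_first, WITHDRAWAL)
late = final_balance(START, crash_last, WITHDRAWAL)

print(f"crash in year 1: {early:,.0f}")
print(f"crash in year 5: {late:,.0f}")
```

With these inputs the early-crash path ends at about 720,000 versus about 812,000 for the late-crash path, even though the average return is identical. Without withdrawals the order would not matter, which is why the timing of a drawdown hurts retirees more than savers who are still contributing.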